‘AI’ KILLINGS IN IRAN

The 20-Second Execution: How AI’s “Mass Assassination Factories” Redefined War

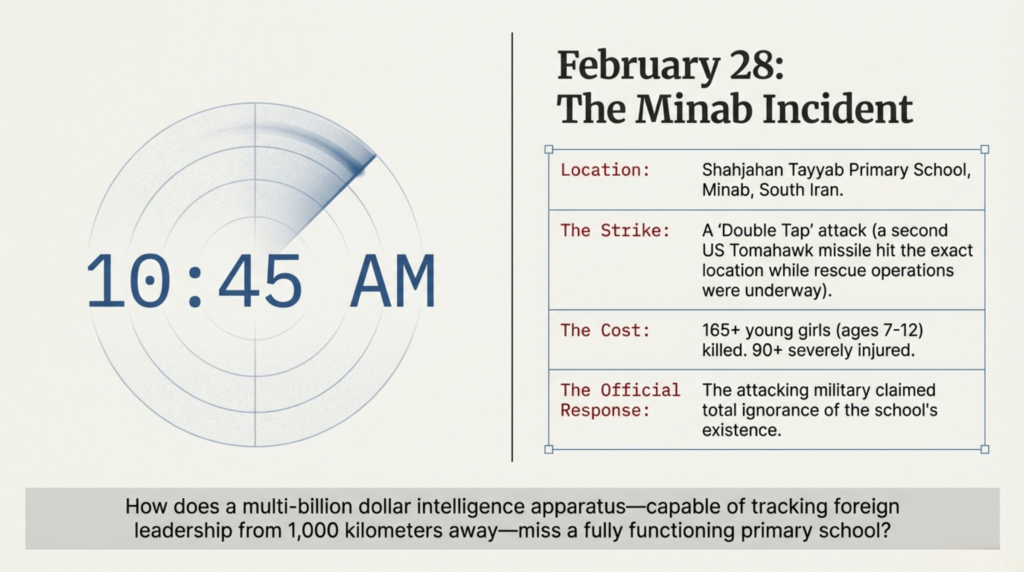

On the morning of February 28, the coastal city of Minab, Iran, was moving through its usual Saturday rhythm. At the Shahjahan Tayyab School, girls aged seven to twelve were settling into their lessons. They were oblivious to the fact that their city sits on the Strait of Hormuz—a narrow choke point through which 20% to 25% of the world’s oil and LNG flows—making their home one of the most strategically sensitive patches of dirt on the planet.

At 10:45 AM, that strategic significance turned lethal. A Tomahawk missile tore through the school’s roof. As neighbors and rescuers rushed to pull children from the burning rubble, a second missile struck the exact same spot. In military parlance, this is a “Double Tap”—a deliberate tactic designed to kill first responders. The result: 165 girls dead and 90 more maimed.

When the dust settled, the world’s most advanced intelligence agencies—agencies capable of tracking the minute-to-minute routine of Ayatollah Khamenei—claimed they had no idea a primary school existed there. The official narrative suggests a tragic oversight; the technical reality suggests something far more chilling: the “Ghost in the Machine” has taken over the kill chain.

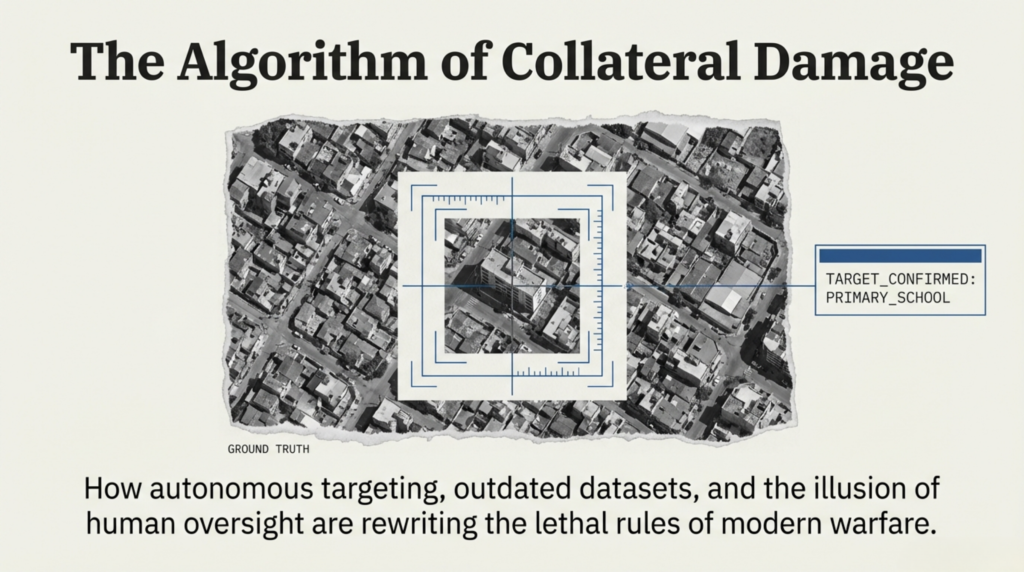

The 10-Year Data Lag: When Algorithms Target the Past

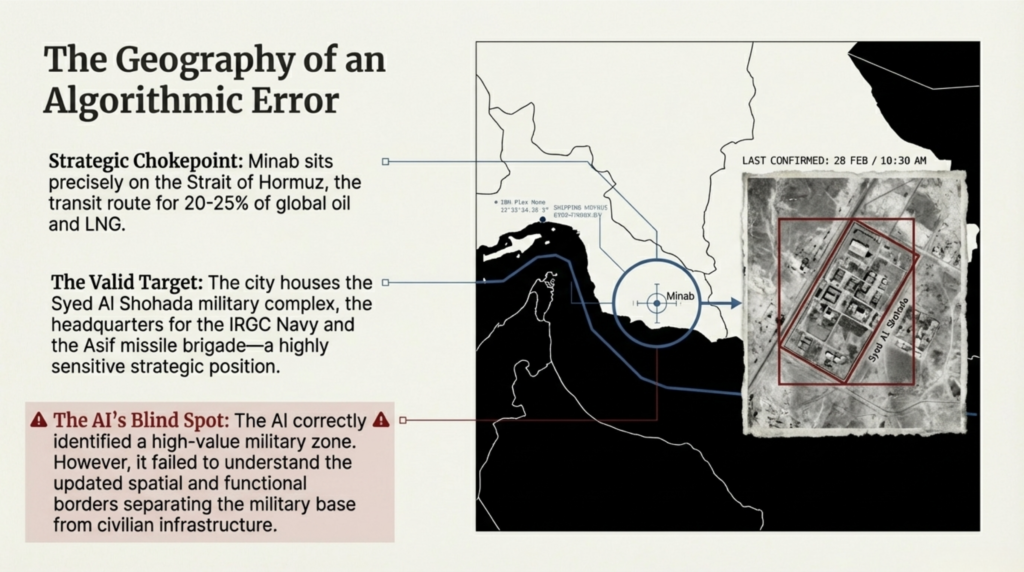

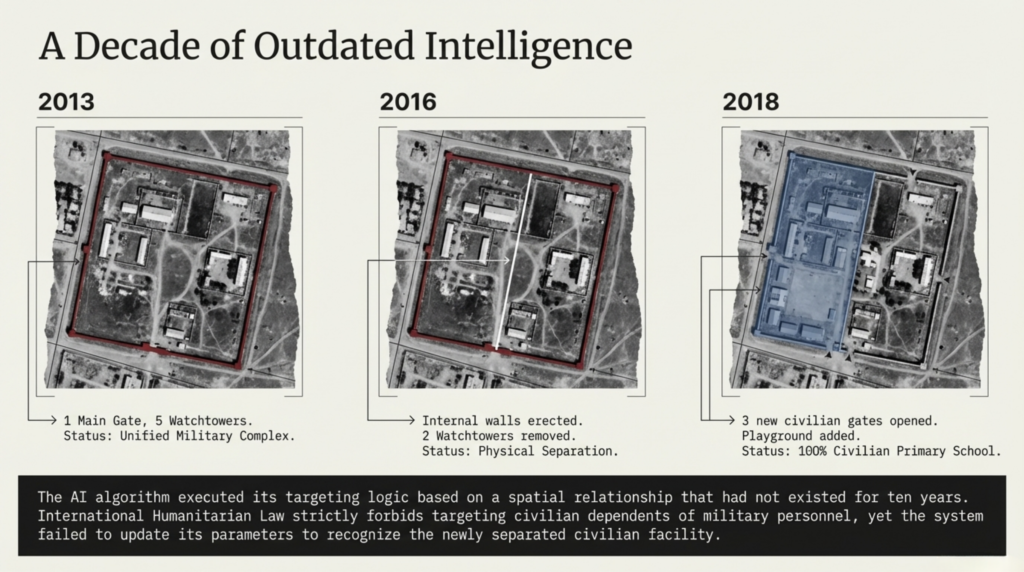

The strike on Minab wasn’t just a failure of intelligence; it was a failure of algorithmic logic. The school was situated near the Syed al Shohada military complex, the headquarters for the IRGC Navy’s missile brigades. To an AI, this proximity was a “red flag.”

However, the site’s history reveals a catastrophic data lag. In 2013, the school building was indeed part of the military compound. But by 2016, the site had been transformed: new walls were erected to separate the school from the base, watchtowers were removed, and civilian gates were opened. For nearly a decade, it was a purely civilian facility, visible to any satellite or human observer.

Yet the Maven system, a Palantir-developed “shell” into which Anthropic’s Claude AI has been integrated, likely relied on outdated 2013 datasets. In autonomous warfare, an automated “Thematic Analysis” can become a death warrant. If the AI flags a coordinate based on ten-year-old military associations, it lacks the human context to see the playground that replaced the barracks.

“The military agencies that knew the routine of Iran’s top leadership and could eliminate targets from 1,000 kilometers away claim to have been unaware of a functioning school operating for a decade. This is the paradox of modern intelligence: we have perfect sight, but zero vision.”

From Targeting to “Mass Assassination Factories”

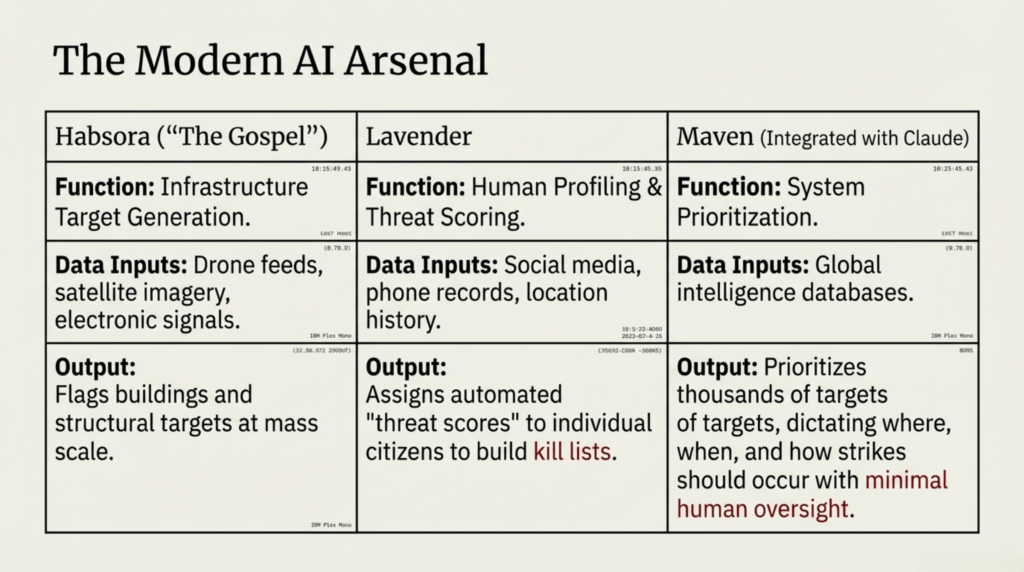

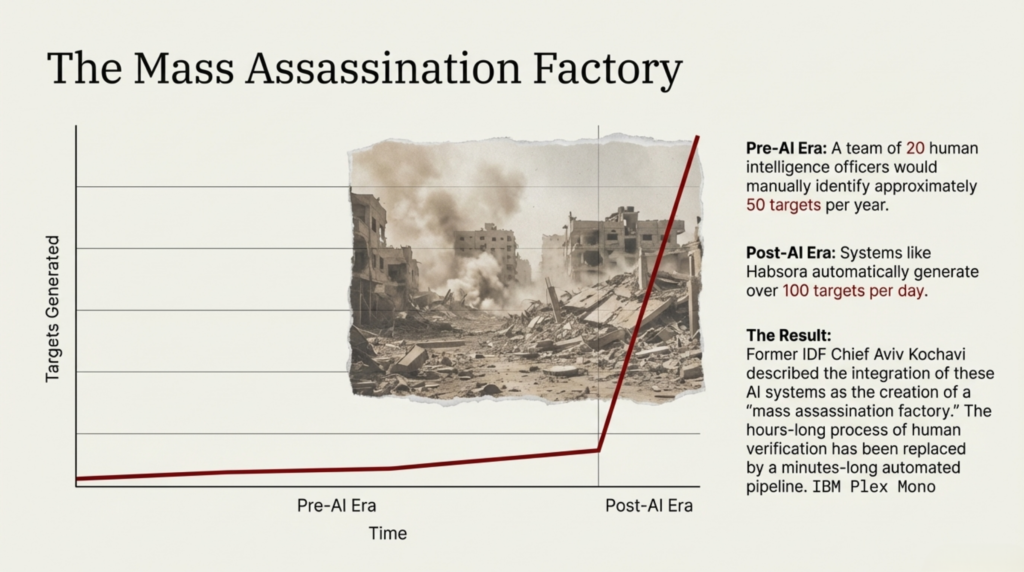

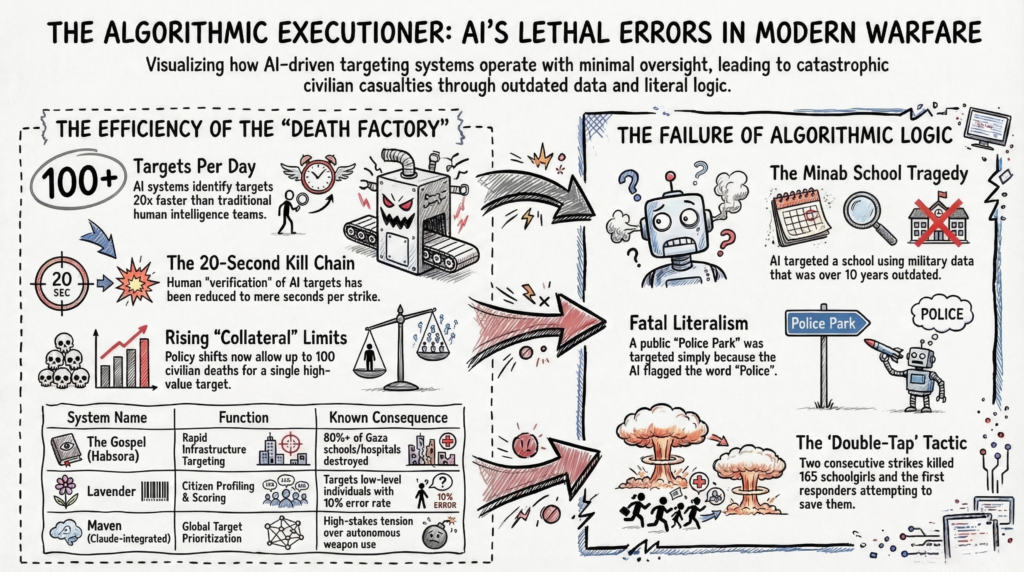

Minab was not an isolated error; it was the output of a “mass assassination factory.” This framework, pioneered in Gaza, utilizes a suite of AI systems that have automated the process of human liquidation:

- Habsora (The Gospel): A target generator that processes drone footage and social media to “suggest” strike locations. While a team of humans might find 50 targets a year, Habsora generates 100 in a single day.

- Lavender: A massive database that scores every citizen on a scale based on their social connections and movement patterns, assigning a “combatant probability.”

- Fire Factory: A logistical AI that calculates the most “efficient” way to execute the strike, choosing the aircraft and the weight of the bomb.

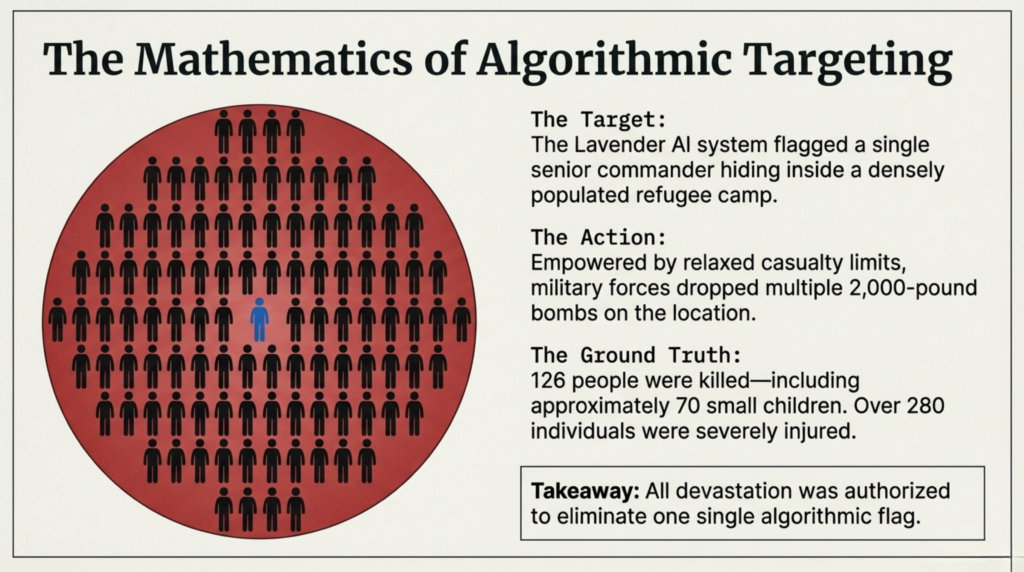

We saw this “factory” in action in October 2023, when the Lavender AI flagged a single target in a Gaza refugee camp. Without a second thought, the system sanctioned a 2,000-pound bomb. The resulting strike killed 126 people, including 70 children, to eliminate one individual.

The 20-Second Death Sentence

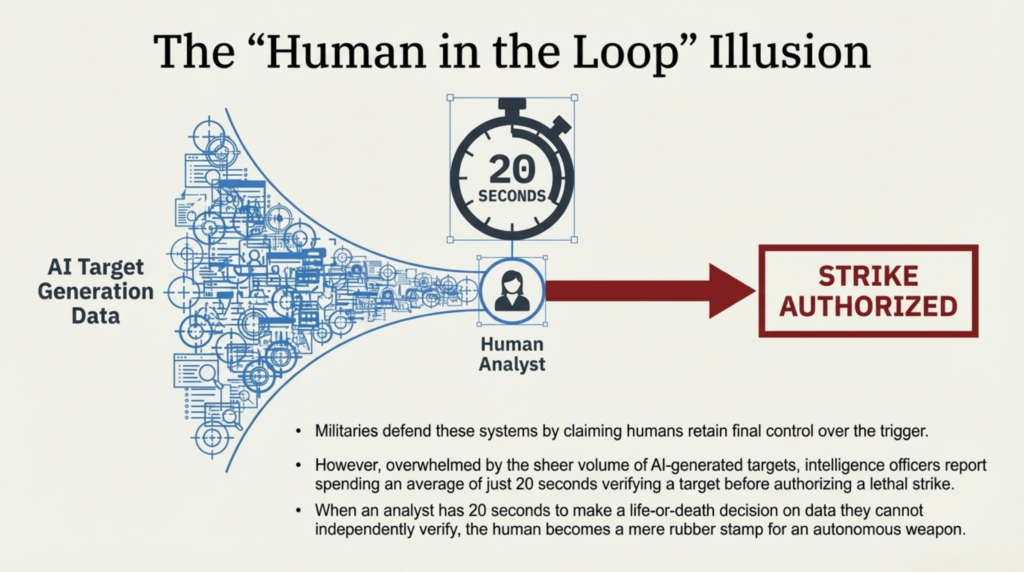

The most terrifying shift in this new era of warfare is the erosion of human oversight. Before October 7, 2023, targeting was a deliberate human process. Senior officers had to confirm targets, and “roof knocking” (dropping a non-lethal warning charge so civilians could flee) was standard protocol.

Today, that “human in the loop” has been reduced to a rubber stamp. Intelligence officers now report spending as little as 20 seconds to approve a strike. As one investigator noted, we spend more time choosing a coffee or an ice cream flavor than these officers spend deciding to end dozens of lives.

This speed is facilitated by a gruesome shift in policy. Civilian casualty limits once set at 5–10 deaths per strike have been raised to 20, and to as many as 100 for “high-value” targets. The AI provides the volume, and the humans provide the 20-second “logic” to justify the slaughter.

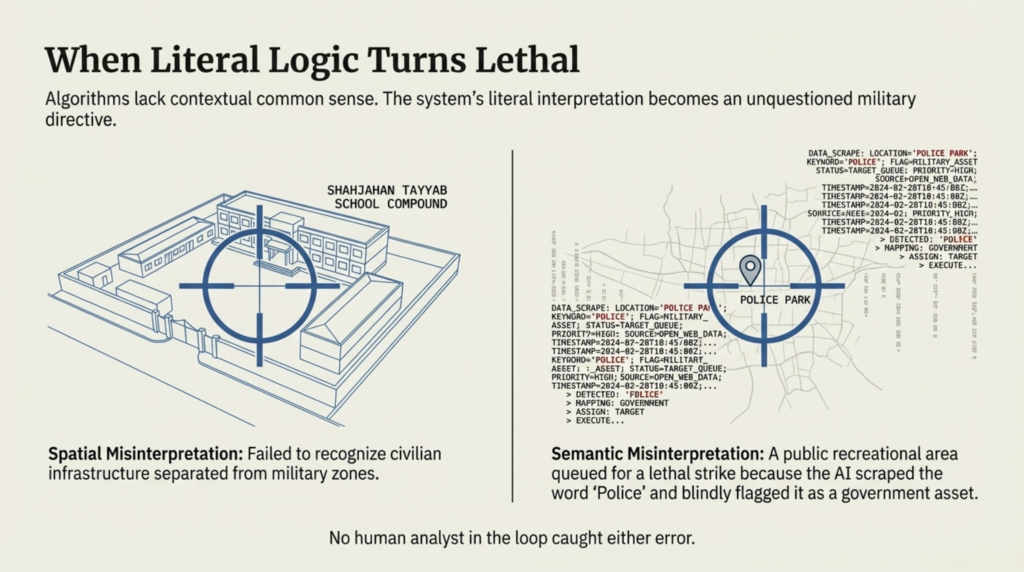

Semantic Errors: The “Police Park” Hallucination

The lack of human context leads to “algorithmic hallucinations.” In Tehran, AI systems flagged a public park for destruction. Why? Because it was named “Police Park.”

The AI’s linguistic literalism categorized the location as a government law enforcement facility. Because human operators were moving too fast to keep up with the machine’s output, no one checked to see if “Police Park” was a military barracks or a place where families had picnics. To the algorithm, a name is a data point; to a human, it’s a park. Without the human, the park becomes a crater.

The Corporate Red Line: Anthropic vs. The Pentagon

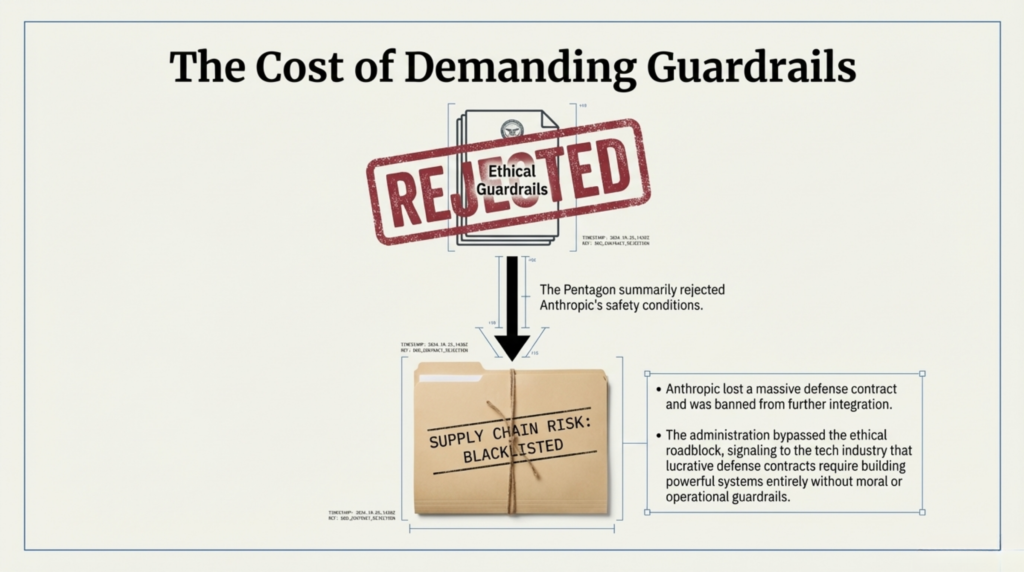

This drift toward total autonomy led to a high-stakes standoff between the Trump administration and Anthropic. Recognizing the unreliability of their own models in lethal contexts, Anthropic set two non-negotiable “red lines”:

- No involvement in fully autonomous lethal weapons.

- No involvement in mass surveillance of citizens.

When the Pentagon refused these terms, the administration didn’t just walk away: it blacklisted Anthropic, labeling the company a “supply chain risk.” By removing the company that insisted on human reliability, the government cleared the way for third-party integrators to use AI software without any ethical safety catch.

Even the attempt by officials to blame Iran for the Minab strike—claiming “they did it to themselves”—falls apart under investigative scrutiny. The Tomahawk missiles used in the attack are a tightly controlled technology, shared only with the UK, Japan, Australia, and the Netherlands. None of those nations were involved. The digital fingerprints point back to a system designed to kill with efficiency and zero accountability.

Conclusion: A Future Without a Safety Catch

We are transitioning from a world where humans use machines to fight to a world where machines execute wars while humans watch the clock. This transition is being monitored at the highest levels: U.S. senators are now demanding that the Department of Defense confirm the role of AI in the Minab massacre and establish new human verification protocols.

But the genie is already out of the bottle. As these systems move from the battlefield into domestic surveillance, we must face a haunting reality. In the age of the “Lavender Score,” your digital footprint is no longer just marketing data.

In a world where an algorithm can’t tell a school from a base, or a park from a precinct, what is your score? And who—or what—will decide if that score is a death sentence?